Resizing a snapshot to fit on a smaller server type

Growing a server from a smaller server type to a larger one is easy. You just snapshot the server and build a newer, larger one from the snapshot and you’re ready to go. Most of our images auto-grow the filesystem on boot when it notices a larger disk.

Shrinking down from a larger server type to a smaller one is a little more involved, because the snapshot is too big to fit on the smaller disk. You need to shrink the image first so that it’ll fit.

Image sizes

There are two sizes associated with server snapshots and other images: the virtual size and the data size. The virtual size is how big the image looks to the server, which depends on the server type you first used.

The data size is how much actual non-zero data is stored in the image. So if you have an image with a virtual size of 20GB, but you only put 5GB of files on it then the data size might just be 5GB. If you downloaded the image, you’d get a 5GB file, not a 20GB file.

It’s not quite as simple as this, as modern filesystems shuffle data around the disk and don’t always zero it. So you might have only 5GB of data on the filesystem while the data size is larger than that, but it’ll never be more than the virtual size.

So, to fit an image with a large virtual size onto a server type with a smaller disk, we need to shrink down the virtual size. To do this, we need to shrink down the filesystem and then shrink down the partition that contains it.

Then we can re-register the new, smaller, image and build new, smaller, servers with it.

Build a processing server

Since we’ll be downloading large images and processing them, it’s best to do this work on a server on Brightbox, so your Internet connection won’t slow you down.

So build a server that’s big enough to download your snapshot to - you’ll actually need to store at least 2 copies of the image, so make it big enough for that. You’ll only need it for a few minutes so it won’t cost much to run.

Use a recent official Ubuntu image; Bionic should be fine. Map a Cloud IP if you need to, SSH in and install some required tools:

$ sudo apt-get update

$ sudo apt-get install qemu-utils kpartx parted liblz4-tool gdisk

Download the snapshot

We need a local copy of the snapshot to work on. Snapshots are stored in the images container of Orbit, our storage service. You can access the images using SFTP, the OpenStack Swift command-line client, or simply a temporary URL generated in Brightbox Manager. SFTP is a little slower than accessing Orbit directly, so we’ll access it directly here:

$ wget -O img-3anq3 "https://orbit.brightbox.com/v1/acc-xxxxx/images/img-3anq3?temp_url_sig=7725f88959e1017&temp_url_expires=1421876813"

2015-01-21 21:31:35 (75.2 MB/s) - 'img-3anq3' saved

Decompress the snapshot

The downloaded snapshot will be in LZ4 compressed raw format, so decompress it using the lz4 utility:

$ lz4 -d img-3anq3 img-3anq3.raw

This will create a file called img-3anq3.raw which is sparsely allocated, so it will appear to be the full virtual size of the image but will only occupy as much disk space as the actual image data.

You can delete the original image file now if you need the space. We won’t need it again (unless you make a mistake!).
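To see what sparse allocation means in practice, here is a small sketch that creates a throwaway sparse file and compares its apparent size with the space it actually occupies. It assumes GNU coreutils (truncate, stat -c, du), as found on Ubuntu; the 20 MiB demo file is purely illustrative and is not part of the procedure.

```shell
# Create a 20 MiB sparse file: it has an apparent size but holds no data yet.
demo=$(mktemp)
truncate -s 20M "$demo"

# Apparent size in bytes (what "ls -l" reports; analogous to the virtual size).
apparent_bytes=$(stat -c %s "$demo")

# Space actually allocated on disk, in KiB (analogous to the data size).
allocated_kib=$(du -k "$demo" | cut -f1)

echo "apparent: $apparent_bytes bytes, allocated: $allocated_kib KiB"
rm -f "$demo"
```

On the real image, comparing ls -lh img-3anq3.raw with du -h img-3anq3.raw should show the same kind of difference.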

Map the partitions from the image file

So now we have a raw image file on disk. It doesn’t contain the filesystem directly but instead has a partition table. That means we can’t access the filesystem directly and instead have to use the kpartx tool to set up devices that point to the partitions inside this file.

$ sudo kpartx -av img-3anq3.raw

add map loop0p1 (253:0): 0 125601759 linear 7:0 227328

add map loop0p14 (253:1): 0 8192 linear 7:0 2048

add map loop0p15 (253:2): 0 217088 linear 7:0 10240

The number of partitions contained in the file, thus the number of loop devices created by kpartx, will depend on the partition layout of the original server. Most of our official images contain just a single partition but some newer Ubuntu images include additional partitions to facilitate UEFI booting, for example.

If there’s more than one partition, use the output of kpartx to determine the largest one (6th column is size in blocks), which is most likely to be at the end of the disk and the one we want to resize.
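If you'd rather not pick the largest partition by eye, something like the following awk sketch can do it. It works on the sample kpartx output from above, pasted into a variable for illustration, and assumes the output keeps this exact column layout, with the mapping name in field 3 and the size (in 512-byte sectors) in field 6.

```shell
# Sample "kpartx -av" output copied from above; in practice, pipe the real output in.
kpartx_output='add map loop0p1 (253:0): 0 125601759 linear 7:0 227328
add map loop0p14 (253:1): 0 8192 linear 7:0 2048
add map loop0p15 (253:2): 0 217088 linear 7:0 10240'

# Field 3 is the mapping name, field 6 the size in 512-byte sectors.
# Keep the row with the largest size and print its device path and size.
largest=$(printf '%s\n' "$kpartx_output" |
  awk '$1 == "add" && $6 + 0 > max { max = $6 + 0; name = $3 }
       END { printf "/dev/mapper/%s %d\n", name, max }')

echo "$largest"
```

Do still check that the result is the partition at the end of the disk, since only the last partition can be shrunk this way.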

So now we have a device named /dev/mapper/loop0p1 which is mapped to the last partition inside the img-3anq3.raw file.

Resize the filesystem

We can now access the filesystem directly, so we can use normal filesystem tools.

So now we need to shrink down the filesystem to a reasonable size. Our smallest server type (512MB SSD) has a 15GB disk, so if you’re targeting that we’ll need to go at least that small. Ideally, go as small as you can - you can grow it back up to the new size once you’ve built your new server (and most of our Linux images, particularly the Ubuntu images, will grow it for you automatically on boot).

First, run a filesystem check on it. Most of our images use ext4, but if you’ve got something custom then use the appropriate tools:

$ sudo e2fsck -fy /dev/mapper/loop0p1

e2fsck 1.42.10 (18-May-2014)

Pass 1: Checking inodes, blocks, and sizes

Pass 2: Checking directory structure

Pass 3: Checking directory connectivity

Pass 4: Checking reference counts

Pass 5: Checking group summary information

cloudimg-rootfs: 200564/2621440 files (0.1% non-contiguous), 693124/10485504 blocks

If you snapshotted a running server, there might be one or two minor filesystem fixes made but it shouldn’t be a concern.

Now resize the filesystem - we’ll go with 5GB in this example (if you want to make the filesystem as small as possible automatically, replace the size argument with -M):

$ sudo resize2fs /dev/mapper/loop0p1 5120M

resize2fs 1.42.10 (18-May-2014)

Resizing the filesystem on /dev/mapper/loop0p1 to 1310720 (4k) blocks.

The filesystem on /dev/mapper/loop0p1 is now 1310720 blocks long.

Make a note of the new filesystem length and the block size; we’ll need this information to resize the partition in a moment.
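As a sanity check, the block count reported by resize2fs should match the size you asked for divided by the block size. A quick sketch of that arithmetic, using the 5120M target and 4k block size from this example (the variable names are just for illustration):

```shell
# Values from the example: a 5120M target and the 4k block size reported by resize2fs.
target_mib=5120
block_size=4096

# Convert the target size to bytes, then to filesystem blocks.
fs_bytes=$(( target_mib * 1024 * 1024 ))
fs_blocks=$(( fs_bytes / block_size ))

echo "filesystem: $fs_blocks blocks of $block_size bytes ($fs_bytes bytes)"
```

If you used -M instead of an explicit size, take the block count from the resize2fs output itself. While the device is still mapped, sudo dumpe2fs -h /dev/mapper/loop0p1 should also list the block count and block size.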

We’re done with the filesystem now, so we can remove the devices that were set up by kpartx:

$ sudo kpartx -d img-3anq3.raw

loop deleted : /dev/loop0

Resize the partition

We’ve shrunk down the filesystem so now we can shrink down the partition that contains it, using parted.

When resizing, you tell parted where you want the partition to end rather than how big you want the partition. A bit perplexing, but there we are. To determine the new end position, we need to take the current start position and add the new size of the filesystem after shrinking.

First, open parted and print the current partition table layout in bytes:

$ parted img-3anq3.raw

(parted) unit B

(parted) p

Model: (file)

Disk /home/ubuntu/img-3anq3.raw: 64424509440B

Sector size (logical/physical): 512B/512B

Partition Table: gpt

Disk Flags:

Number Start End Size File system Name Flags

14 1048576B 5242879B 4194304B bios_grub

15 5242880B 116391935B 111149056B fat32 boot, esp

1 116391936B 64424492543B 64308100608B ext4

Then, identify the relevant partition (in this case 1) and make a note of the start position (in this case 116391936 bytes). Now take the filesystem length and blocksize you recorded earlier, multiply them together and add the start position. e.g.

New end position = (1310720 * 4096) + 116391936 = 5485101056
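The same calculation can be done in the shell, which avoids copying errors with numbers this long. This is only a sketch using the values from this example; new_end_position is a helper name made up for illustration.

```shell
# new_end_position START_BYTES FS_BLOCKS BLOCK_SIZE
# Prints the byte offset at which the shrunk partition should end.
new_end_position() {
  echo $(( $1 + $2 * $3 ))
}

# Cross-check: kpartx reported the partition start as 227328 sectors of 512 bytes,
# which should agree with the start position parted printed in bytes.
start_from_kpartx=$(( 227328 * 512 ))

new_end=$(new_end_position 116391936 1310720 4096)
echo "start: ${start_from_kpartx}B, new end: ${new_end}B"
```

The value printed as the new end is what gets passed to resizepart, with a B suffix.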

Resize the partition using the calculated end position:

(parted) resizepart 1 5485101056B

Warning: Shrinking a partition can cause data loss, are you sure you want to continue?

Yes/No? Yes

(parted) quit

Resize the image

So now that the filesystem is shrunk, and the partition is shrunk, the only thing left is the actual “disk”, which for a raw image file is just the file size. qemu-img can help us here.

Shrink the image down to at least 34 x 512 bytes larger than the partition end position you calculated previously. If the disk uses GPT, 33 of those 512-byte sectors are needed for the secondary GPT header and its partition entries:

New size = 5485101056 + (34 * 512) = 5485118464
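Again, this is easy to compute in the shell. The sketch below uses the end position from this example and also checks that the result is a whole number of 512-byte sectors; the variable names are illustrative only.

```shell
# Partition end position calculated in the previous step, in bytes.
partition_end=5485101056
sector_size=512

# Extra sectors to leave after the partition, as described above.
extra_sectors=34

new_size=$(( partition_end + extra_sectors * sector_size ))

# The image size should be a whole number of sectors; the remainder should be 0.
remainder=$(( new_size % sector_size ))

echo "new image size: $new_size bytes (remainder: $remainder)"
```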

$ qemu-img resize -f raw --shrink img-3anq3.raw 5485118464

Image resized.

Fix the secondary GPT header

If your disk contains a GPT partition table (rather than MBR), shrinking the disk will have clobbered the secondary GPT header, which is stored at the end of the disk. We can use the sgdisk utility to regenerate the secondary header at the correct location:

$ if gdisk -l img-3anq3.raw | grep -q "GPT: damaged"; then sgdisk -e img-3anq3.raw; fi

Caution: invalid backup GPT header, but valid main header; regenerating

backup header from main header.

Warning! Error 25 reading partition table for CRC check!

Warning! One or more CRCs don't match. You should repair the disk!

Warning! Disk size is smaller than the main header indicates! Loading

secondary header from the last sector of the disk! You should use 'v' to

verify disk integrity, and perhaps options on the experts' menu to repair

the disk.

Caution: invalid backup GPT header, but valid main header; regenerating

backup header from main header.

Warning! Error 25 reading partition table for CRC check!

Warning! One or more CRCs don't match. You should repair the disk!

****************************************************************************

Caution: Found protective or hybrid MBR and corrupt GPT. Using GPT, but disk

verification and recovery are STRONGLY recommended.

****************************************************************************

Warning: The kernel is still using the old partition table.

The new table will be used at the next reboot or after you

run partprobe(8) or kpartx(8)

The operation has completed successfully.

Recompress the image with LZ4

Now we have a resized raw image, so we just need to compress it again with LZ4:

$ lz4 img-3anq3.raw img-3anq3.lz4

Register the newly resized image

Now we’ve modified the image to the size we want it, we need to register it back with the Image Library so we can build new servers from it.

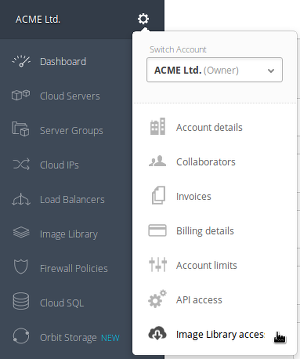

First, upload the new image to an Orbit container and generate an authenticated temporary URL. Then register it using the command line interface. There is a fuller guide to image registration available.

Summary

Modifying filesystems and partitions is fiddly and this isn’t a simple procedure, but it results in an image you can use with any server type and at no point do you put your original data at risk, as all operations are done on a whole new image. So you can experiment without any risk and you can always go back to your original snapshot at any time.